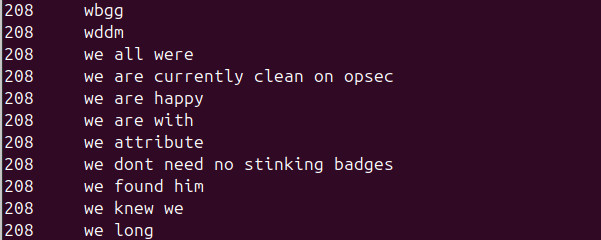

I have once again updated the phraser word and phrase lists.

They use fresher data from Wikipedia, fan wikis, crossword constructors, and the chat transcript from Houthi Small Group, so if some future puzzles solve to the phrase "we are currently clean on opsec," you'll be ready.


2025-07-09T17:16:35.264808

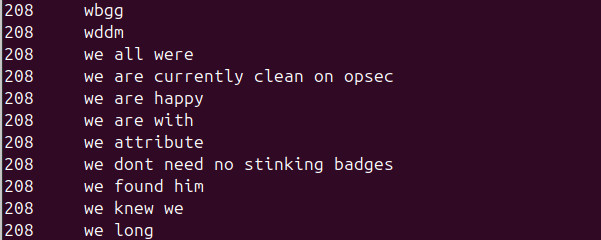

Today, I walked to El Polin Spring in San Francisco's Presidio and looked in a hidden Field Note. Thus I completed ⅒ of one of 22 Fun Things to do in your National Park. While in the area, I glimpsed a hummingbird and completed an entire Fun Thing to do in your National Park.

![the box, open, reveals some interpretive text about berries: Summertime is well known for berries and the berries we eat all come from wild ancestors. Around El Polin Spring there are many native berries: elderberries (tiny red clusters), thimbleberries (large soft leaves), snowberries (white), twinberries, wild strawberries, California blackberries and more. Even poison oak has berries! See how many different kinds you can notice. [Note: Not all berries are safe for humans to eat!]](https://lahosken.san-francisco.ca.us/importable/2025/field-notes-open.jpg)

I was following a map, but really following my memory.

The Presidio Trust publishes an activity map for kids, Adventures in the Presidio.

Page 2 of that linked PDF is a map encouraging you to find Hidden Field Notes (inside wooden blocks perched on fence posts and stumps). There was a little picture of what to look for: a little box held together by a hinge. When I saw that, I realized I'd already spotted one of those Hidden Field Notes, near El Polin Spring. The activity map had a box with a note: "glimpse a hummingbird at El Polin Spring".

I leaped to the conclusion: This map was showing me where to find Hidden Field Notes. Or maybe geocaches; somewhere else on the map, it mentioned geocaches.

This seemed kinda sketchy. If I hadn't already known about this little box at El Polin Spring, that would have been a darned large area to search, and the map location wasn't even that close to the real thing; it seemed to indicate a place west of Inspiration Point. Some friends of my mom tried to use this map to find a box north of Mountain Lake Park. It didn't go well: the map seems to suggest you should hop over a padlocked gate and walk along an unused, overgrown dirt road that's more of a gully than a road in some places.

Now that I'm home, I've looked over the map more closely, and I think I've figured it out.

Hey mom, tell your friends: the "map" doesn't show wooden boxes. It's basically an activity list sketched onto a map.

You aren't expected to go to the places marked on the map. That's good, since some are in the Pacific, on a golf course, on private property…

There is a separate map of the wooden boxes, a.k.a. Field Notes: Behold the map. The map activity list also mentions geocaches; the Presidio does have some geocaches, but they're not shown on this map activity list. There's a geocache on the north side of Mountain Lake Park, but not near the mark on the map activity list. (That geocaching.com site I linked to has maps; you need to have an account and be logged in to see them.)

A clearer, albeit much-less-pretty guide to kids' activities in the Presidio: Self-Guided Adventures.


2025-07-05T21:17:13.401139

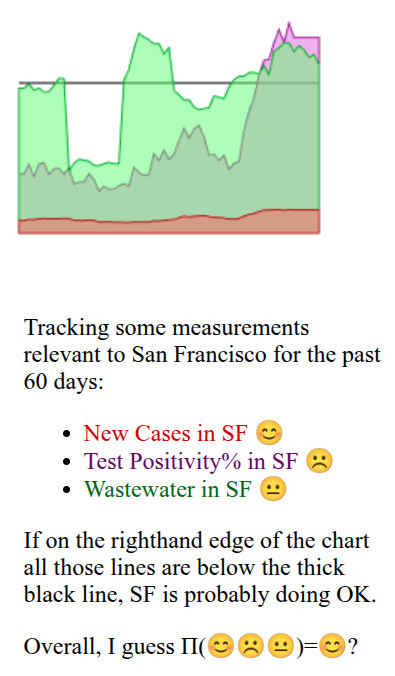

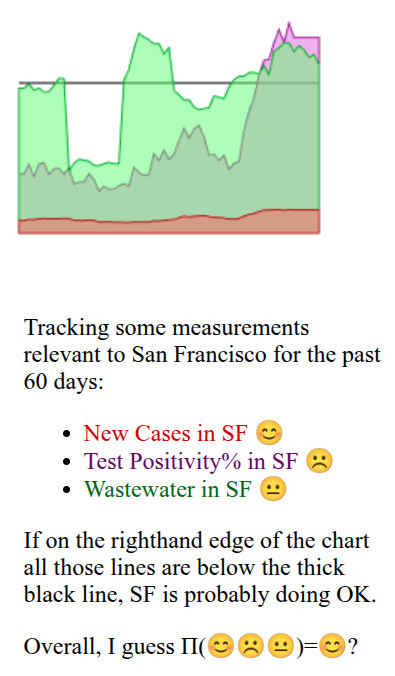

I continue to check my little dashboard of #SanFrancisco COVID numbers each morning to figure out whether visiting the comic book shop in person is a nice excuse for an errand or the moment my doctor will pinpoint, asking, "You gave yourself long-term heart problems by picking up a funnybook about a barbarian with a talking axe?" Lately, one of the numbers I track has whooshed up from pretty-safe to not-so-safe, so that now ⅔ of the numbers I track are not-so-safe:

San Francisco's COVID test positivity recently went up steeply…and then slowed down.

Maybe it's peaking and will fall again? (That would be nice.)

Maybe it's just pausing a bit before zooming up again? (I hope not.)

I'm still doing indoor errands. But I remember at least one person who mostly made their go/no-go decisions based on % test positivity; I bet they're staying home these days. And people who make go/no-go decisions based on wastewater data have maybe been staying home for a while.


2025-07-03T15:55:30.010323

Arty things I saw on my #SanFrancisco walk this morning:

Cement infrastructure for the "Naga" sea serpent statue going in at Rainbow Falls. The statue is sinuous but it's easier to make rectangular cement forms. So…depending on whether you're near or far, you might think this thing was gonna be all right angles or serpentine. (I walked up the path to the creepy Celtic cross so I could take the far picture, please clap)

Well Done Signs sign painter's van with woodpecker logo promising "Always Hand Painted." (Looking at the web site, I guess this van is visiting from Portland, OR.)

Sidewalk chalk art at 20th and Irving (alas, pretty scuffed by the time I got there) asks a very American question: Pay your bills or buy your pills?


2025-06-29T16:29:30.959040

A swimming pool develops a crack. A mother develops dementia. Everything you ever cared about, everyone you ever cared about will wither, decay, and fade away. You will wither, decay, and fade away. One character in the book, a swimmer, says "Everything is loss." She wasn't wrong; piece by piece, it all vanishes. I loved this book and recommend it and say: Brace yourself. I also noted the passage "And the time that your other brother, the lawyer, went after her boss, Dr. Nomura, when he tried to cheat her out of her 401(k)…." If that's based on a true story, then I'm glad that brother knew what to do.


2025-06-22T00:00:16.753381

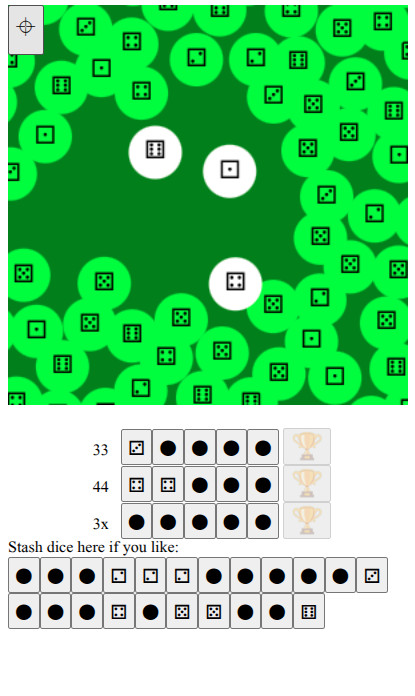

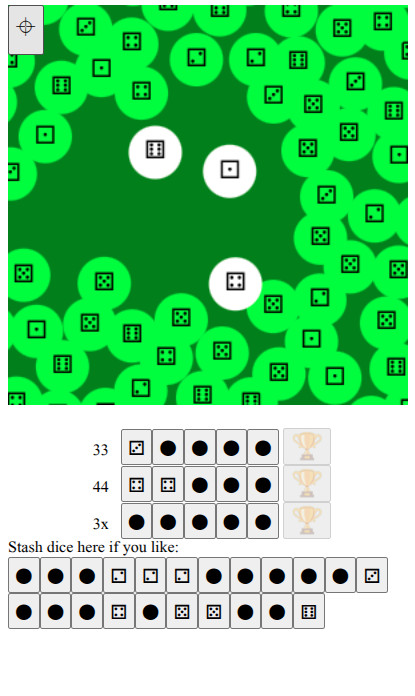

As I walk around the city for exercise and errands, I like to play walking-around games on my phone: games that use GPS* to move my little guy around in the game. I just wrote a new such game. It runs in a web page: Walkzee.

(Because Apple hates the web, if you visit Walkzee on your iPhone and you, like 99.9% of iPhone users, have the default web browser and haven't turned on the enable geolocation setting, the game will do nothing.)

I'm still tweaking the game. I play it as I walk around, then sit down and fix bugs when I get home. As of today, it might be of more interest to Mystery Hunt players than walkers: there are no instructions, so half the challenge is figuring out how to play.

Why am I writing yet another walking-around phone game?

The previous such game I wrote ran on top of Google Cloud Services. You might remember that a few months back, I switched my backups away from Google servers, which are run by a will-abet-genocide-for-$$$ division within Google. Some days ago, I wondered: "Why is Google still billing me?" My little walking-around game didn't make Google's servers think very hard; it almost squeaked under the threshold to run for free. But it was a little over, and thus Google was billing me.

I wrote this new game so that it does all of its thinking and data storage on the phone, not on some server elsewhere on the net. Then I shut down my old game that was running on Google's computers. Now I won't send money directly to Google's pro-genocide division.

This means giving up some server-y features. In the old game, when my phone broke, my score was still saved on some Google machine, so when I got a new phone and resumed play, my big ol' score showed up. In this new game, if I get a new phone, I'll have to start all over building up my score. <sarcasm>oh no…</sarcasm>

(If you do care about scores and furthermore you read this blog mostly because you're into puzzles, have you tried https://huzzlepub.com/? At Huzzlepub, you can paste in your daily Wordle, Toddle, Raddle,… uh, all those daily puzzles, you can paste in that "share your score with your friends" thingy, and it will let you compare your scores with your peers, thus reminding you that Tyler Hinman is still better at crosswords than you are.)

No, I don't think this will change Google's behavior. I'm imagining some Google exec: "We were willing to toss out 'Don't be evil' to get that $$$ military contract, but Larry shut down his app and that little app pays for a cup of coffee every four months, so, uh, make that five months, I want to leave a decent tip or else the barista will spit in my drink." Yeah, no, this isn't changing anything important, aside from me being able to look myself in the mirror; well, OK, that's kinda important.

*Yes, I mean geolocation using GPS and other means.


2025-06-16T13:41:07.929880

It's a history of the struggle to build and inhabit public housing on the white side of Yonkers, NY, USA in the 1990s. (If that sounds familiar but you're sure you didn't read the book, maybe you saw the HBO miniseries?)

It was a pretty interesting, albeit aggravating, read.

Why aggravating?

Yonkers' NIMBYs were national-news-level notable; they were very stubborn. When a judge ordered the city to build public housing on the east side of town, city politicians got elected by promising to just not build that housing. Faced with fines, they stuck to their guns. When the fines threatened to bankrupt the city, when the city had to fire workers, when…

Their appeal went up to the US Supreme Court.

The book isn't written from the NIMBY point of view. I suppose the NIMBYs didn't want to sit down with the author and recount their tales of hurling racist epithets back in the good ol' days. But you feel their presence in every chapter: Voting out the sane politicians; making death threats; hurling those epithets.

Instead we hear about the ousted Yonkers politicians and the outside-Yonkers politicians who were trying to figure out how to make the city comply. Do you fine the city? Seems bad to make city workers lose their jobs just because the city council got taken over by racists. Do you fine the city council members? They can declare bankruptcy, and then get re-elected by pointing out their sacrifices.

And we hear about the people who moved into the new public housing when it was finally built. None of these people looked at Ruby Bridges and thought, "Yep, that's the lifestyle I'm looking for." But they had to learn how to get along with their new neighbors and how to stand up for themselves. There are some real moments of inspiration in there.


2025-06-11T14:06:03.061026

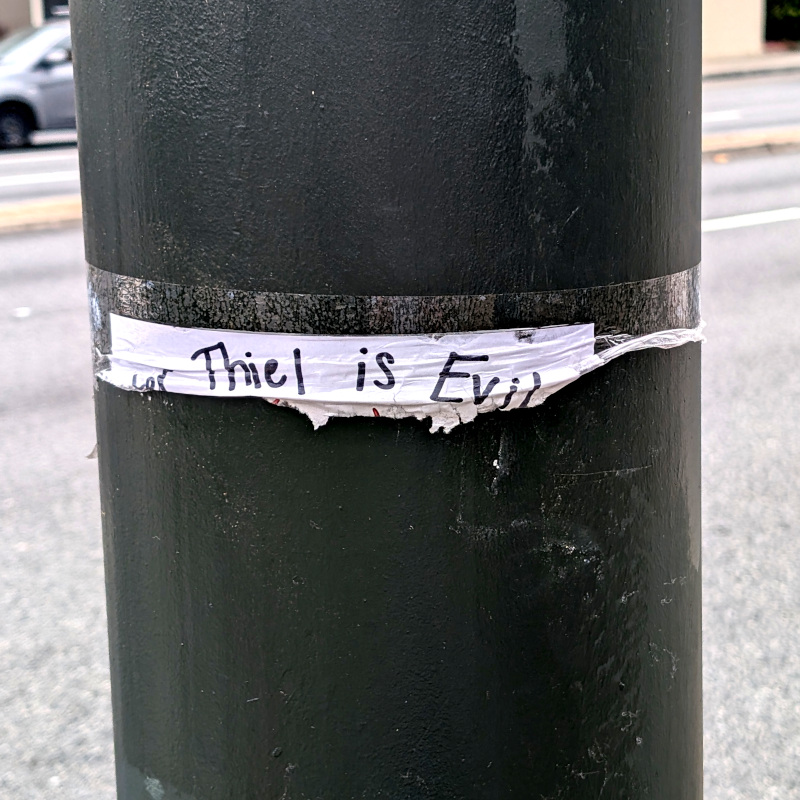

Some photos from walking around #SanFrancisco 's Marina District (and the bus ride back home):

- Someone tried to tear down a flier, but instead just spotlighted the important part of the message.

- Some tentacles atop an entrance of the Palace of Fine Arts turned out to be part of a traveling exhibit of balloon art. As every 12-year-old San Franciscan knows, you can call it the Palace of F. Arts for short, which raises the question: How did they fill all those balloons?

- A new-to-me graffito on the wall above the old Lucky Penny at Geary+Masonic. Sorry for the awkward angle; I snapped this out the bus window as we rolled past.


2025-06-09T00:53:32.936325

Still wrapping my head around the new reality: My cousin quit his job with the federal government and went to work at a startup, thus increasing his job security.


2025-06-07T17:00:00.099403

This SMRT COW "smart cow" smart car impressed me. Yep, that's a nose ring.

[Update: When I saw this car again in late June, it no longer had the Texas SMRT COW license plate, but rather a message-less California plate.]


2025-06-27T19:52:32.539409
